Test Runs

A test case defines the standards, pages and components of a test, and a test run is an 'instance' of that test case. You create a test run after you have set up your test case and just before you start testing.

A test run lets you perform point-in-time testing and compare the latest test run against an earlier one. At the page and component level, you can track whether issues have improved or regressed after a code release.

If you remediate issues and then retest in the same test run instead of creating a new one, you replace that test run's previous issues and lose the ability to compare and track historical changes.
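To illustrate the kind of comparison that separate test runs make possible, here is a minimal Python sketch that compares issue counts per page between an earlier and a later run. The data layout (a dictionary mapping page or component names to issue counts) and the function name `compare_runs` are hypothetical; they are not axe Auditor's export format or API.

```python
from typing import Dict


def compare_runs(earlier: Dict[str, int], latest: Dict[str, int]) -> Dict[str, str]:
    """Classify each page as improved, regressed or unchanged.

    Hypothetical sketch: each argument maps a page (or component) name
    to the number of issues recorded for it in one test run.
    """
    result: Dict[str, str] = {}
    for page in sorted(earlier.keys() | latest.keys()):
        # Assumption: a page missing from a run counts as zero issues.
        before = earlier.get(page, 0)
        after = latest.get(page, 0)
        if after < before:
            result[page] = "improved"
        elif after > before:
            result[page] = "regressed"
        else:
            result[page] = "unchanged"
    return result


if __name__ == "__main__":
    run_1 = {"Home": 12, "Checkout": 5, "Search": 3}
    run_2 = {"Home": 7, "Checkout": 8, "Search": 3}
    print(compare_runs(run_1, run_2))
    # prints {'Checkout': 'regressed', 'Home': 'improved', 'Search': 'unchanged'}
```

A comparison like this needs both sets of results. If you retest inside the earlier test run, its counts are overwritten and there is no baseline left to compare against.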

Before you begin: You must have a test case set up and be on the Test Cases screen.

To create a test run:

- On the Test Cases screen, in the row of the test case you want to create a test run for, click the Create Test Run button in the Actions column.


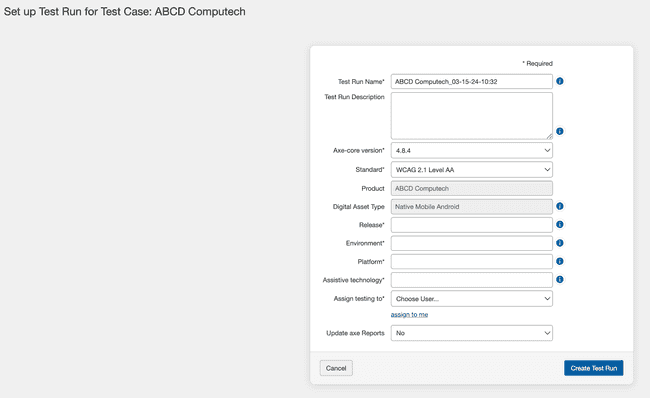

The Set up Test Run for Test Case <Test Case Name> screen appears, displaying a form with the fields described below and a Create Test Run button.

Refer to the following descriptions for the expected input in each field:

- Test Run Name: This field is pre-populated with the test case name followed by a timestamp in the format MM_DD_YY-HH:MM. You can change the name to better describe the intent of your test run.

- Test Run Description: Use this field to record the details of this test, for example the date it was run, its scope (the pages and sections covered), the viewport settings used and any other details your testers will need.

- Axe-core version: Use this field to run your tests against the different rule sets provided by different axe-core versions, so you can compare and validate the issues reported or fixed. The field is pre-populated with the axe-core version the administrator selected on the Admin Settings page. You can use the dropdown menu to select any other axe-core version available to you.

- Standard: This field is pre-populated with the default standard the administrator selected on the Admin Settings page. Use the dropdown menu to choose the most appropriate standard from the list. Your selection narrows both the automated rules that run and the checkpoint test screens presented for manual testing.

- Product: The name of the product being tested. This field is set when you create the test case and cannot be edited during test run setup.

- Digital Asset Type: (Optional) Select one of the ten digital asset types in the dropdown menu to define your assessment.

Selecting a digital asset type filters the testing, remediation and best practice methodologies shown on the Issue details page to the type you selected. When an issue is found against a success criterion, you see only the testing methodology and remediation recommendations for that asset type, so you can quickly reach the information relevant to your test. For example, if you select desktop web, you won't be shown mobile web testing methodology.

If you selected a digital asset type when creating the test case, this field is pre-filled with that selection and cannot be edited.

- Release: Specify the version number of the product being tested. For example, 1.0 for the product's first release.

- Environment: The type of server you are testing on. For example, a production server for a live site.

- Platform: The operating system(s) and browser(s) on which the site will be tested, for example 'Windows and Firefox' or 'Android and Chrome'. Automated tests run through a connected browser on the given platform.

- Assistive technology: Specify any assistive technology used in testing. Assistive technology software and devices help people with disabilities interact with software and websites. Some tests require a screen reader, such as NVDA or JAWS on Windows, or VoiceOver on Mac.

- Assign testing to: Click the down arrow to display a list of available users, then click a user to select them. If you are going to do the testing yourself, click the assign to me link under the field.

- Update axe Reports: Select "Yes" or "No" from the dropdown menu to determine whether axe Reports is updated with the test run data. You can make this selection only if the corresponding option was set to "Selected Test Runs" when the test case was created. If that option was set to "Every Test Run" or "Never", this field is automatically set to "Yes" or "No" respectively and cannot be changed.

Note: Only users with axe Reports integration enabled during axe Auditor installation can view this field.

- Click the Create Test Run button at the bottom of the form.
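The default value of the Test Run Name field follows a fixed MM_DD_YY-HH:MM timestamp pattern. As a minimal Python sketch, assuming a single space between the test case name and the timestamp and a 24-hour clock (neither detail is stated above), a default name could be built like this. The function `default_test_run_name` is hypothetical and is not part of axe Auditor.

```python
from datetime import datetime


def default_test_run_name(test_case_name: str, created: datetime, separator: str = " ") -> str:
    """Build a name in the style of the Test Run Name default.

    Hypothetical sketch: the separator and the 24-hour clock are assumptions.
    """
    # %m, %d and %y give a zero-padded month, day and two-digit year;
    # %H:%M gives zero-padded 24-hour hours and minutes.
    timestamp = created.strftime("%m_%d_%y-%H:%M")
    return f"{test_case_name}{separator}{timestamp}"


if __name__ == "__main__":
    print(default_test_run_name("Checkout Flow", datetime(2024, 3, 5, 14, 7)))
    # prints "Checkout Flow 03_05_24-14:07"
```

Because the timestamp changes with each run you create, default names for runs of the same test case stay distinguishable, which can help when you compare runs later.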

Once you have created a test run, the next step is to start testing a component and/or page. For more information, see Start Testing.
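The rule behind the Update axe Reports field can be summarized as a small decision function. This is a hypothetical Python sketch of the behavior described for that field, not axe Auditor code; the function name `resolve_update_axe_reports` is an illustration, and the option strings simply mirror the labels used in the interface.

```python
from typing import Optional


def resolve_update_axe_reports(test_case_option: str, user_choice: Optional[bool] = None) -> bool:
    """Return True if axe Reports should be updated with this test run's data.

    Hypothetical sketch: test_case_option is the setting chosen when the
    test case was created; user_choice is the tester's Yes (True) or
    No (False) selection, which only matters for "Selected Test Runs".
    """
    if test_case_option == "Every Test Run":
        return True  # Field is fixed to "Yes"; user_choice is ignored.
    if test_case_option == "Never":
        return False  # Field is fixed to "No"; user_choice is ignored.
    if test_case_option == "Selected Test Runs":
        if user_choice is None:
            raise ValueError("Select Yes or No for this test run")
        return user_choice  # The tester's selection applies.
    raise ValueError(f"Unknown test case option: {test_case_option!r}")


if __name__ == "__main__":
    print(resolve_update_axe_reports("Every Test Run"))             # prints True
    print(resolve_update_axe_reports("Selected Test Runs", False))  # prints False
```

Only the "Selected Test Runs" branch depends on the tester's input, which is why the field is editable only in that case.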